How do TikTok bans reshape social media regulation?


How TikTok bans and social media regulation now affect every platform

TikTok bans and social media regulation now shape how governments think about data flows, recommender systems, youth protection, and opaque personalization across the wider internet. If you are trying to understand where regulation is heading, this article breaks it into five practical shifts: why TikTok became the test case, how algorithmic transparency is moving from theory to enforcement, why the attention economy is under pressure, how digital sovereignty is changing platform rules, and what all of this means for websites and AI systems.

The short version is simple: regulators increasingly treat high-reach platforms as systems that can influence behavior at scale, not just host content. That changes the standard for product design. It also creates a useful warning for brands and site owners. If governments are demanding more transparency and control from social platforms, the same expectations will gradually reach recommendation widgets, tracking-heavy websites, and future AI-driven experiences.

The practical value here is not just political analysis. For teams running independent websites, official company sites, or multilingual brand properties, TikTok's regulatory path signals where trust, content structure, and technical clarity matter more than manipulative growth tactics. That is also why this topic matters for SeekLab.io's audience: the market is moving toward clearer information architecture, better governance signals, and less dependence on black-box engagement tricks.

1. How TikTok bans and social media regulation moved from isolated bans to a policy template

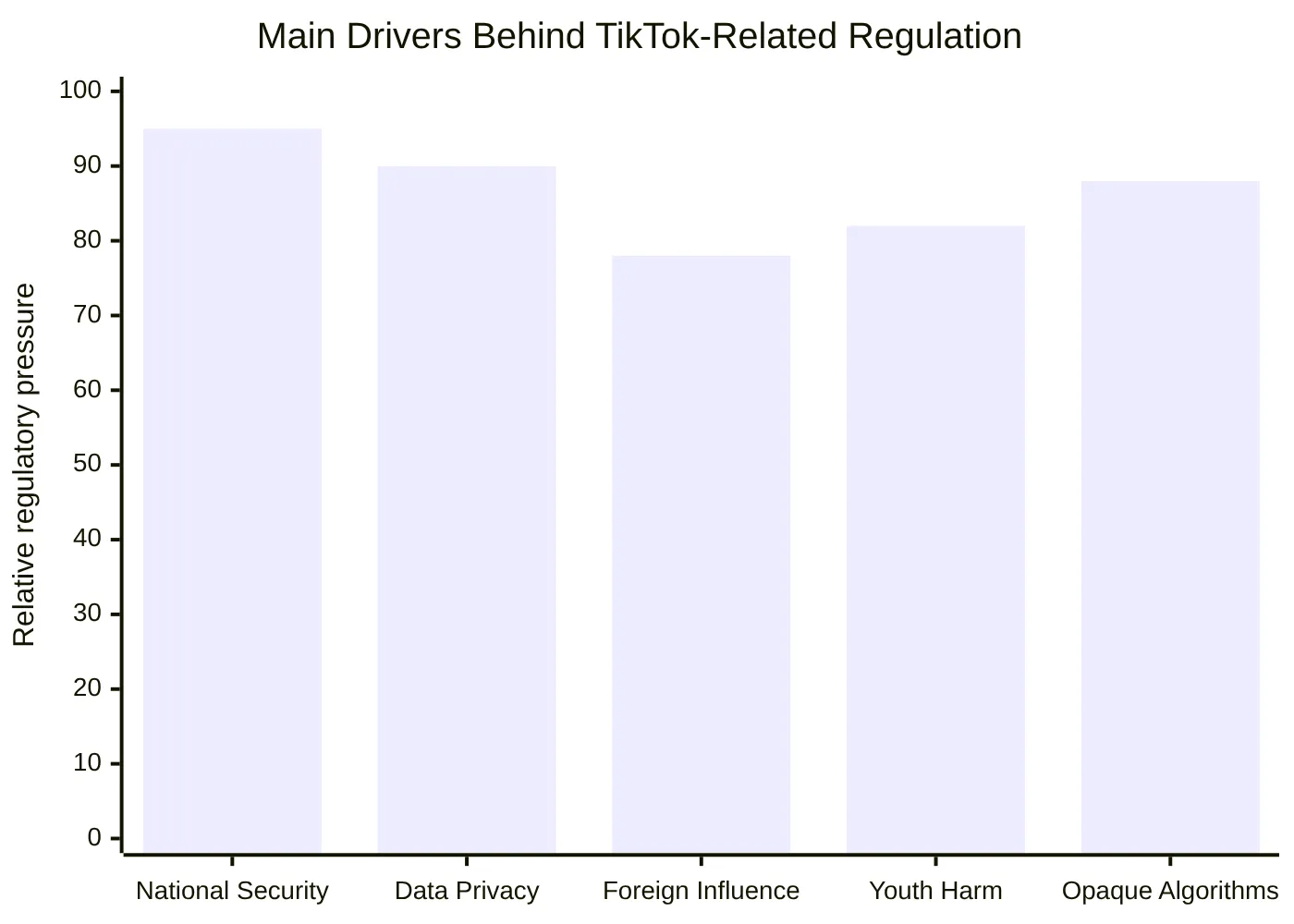

TikTok started as a specific geopolitical concern and became a broader regulatory template. India imposed a full ban in 2020. The US, UK, Canada, New Zealand, EU institutions, and multiple EU member states introduced restrictions on government devices. By early 2026, the research behind this article identifies at least 10 additional countries that had adopted stronger restrictions or effective bans, citing national security, data privacy, foreign influence, and harm to young users.

What matters is not only the number of bans. It is the pattern. Governments are using three escalating models:

| Regulatory path | What it looks like | Why it matters |
|---|---|---|
| Government-device ban | Public-sector devices and networks prohibit the app | Creates a lower-risk first step that can later expand |
| Conditional operation | Divestiture, localized storage, special oversight structures | Turns platform access into a compliance negotiation |
| Full ban | Consumer access blocked, app store removal, telecom restrictions | Signals the state sees the platform as unacceptable under current ownership or data practices |

That pattern matters beyond TikTok. Once lawmakers discover they can pressure a platform through app stores, cloud infrastructure, device rules, and data localization requirements, they rarely limit that logic to one company. TikTok simply made the toolkit visible.

A useful source on the ban timeline and US policy progression is Built In's TikTok ban overview. For the US restructuring path and its policy twists, TechPolicy.Press's timeline gives helpful context.

2. Why TikTok bans and social media regulation now center on algorithmic transparency

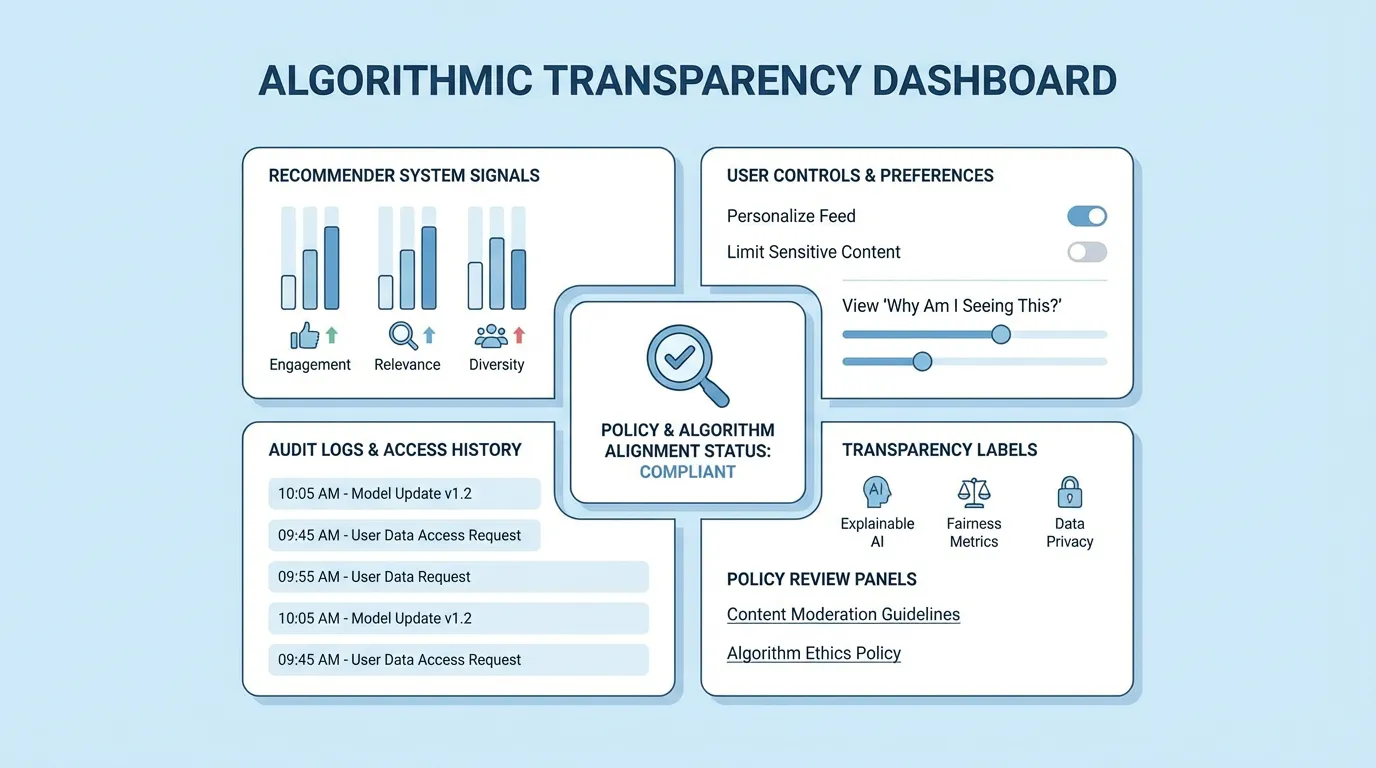

The phrase algorithmic transparency is often used loosely, but the regulatory meaning is becoming more concrete. Governments are not asking platforms to publish every line of code. They are asking them to explain, document, and justify how recommendation systems shape user exposure and behavior.

In practice, algorithmic transparency usually means:

- clear explanations of what signals affect ranking and recommendations

- visible user controls over personalization

- documentation for regulators and vetted researchers

- reporting on system-level risks, not just content moderation outcomes

- non-profiled alternatives where laws require them
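For a website team, these items can start as a written record per recommendation module. The sketch below is a hypothetical internal format (`RecommenderRecord` and its fields are illustrative, not taken from the DSA or any compliance tool) that covers only a subset of the list: documented signals, visible user controls, and whether a non-profiled alternative exists. Regulator-facing documentation and system-level risk reporting would need more than this.

```python
from dataclasses import dataclass, field


@dataclass
class RecommenderRecord:
    """Hypothetical internal record describing one recommendation module."""

    name: str
    purpose: str
    # Ranking signals mapped to a plain-language description of each.
    signals: dict[str, str] = field(default_factory=dict)
    # Controls that users can actually see and change.
    user_controls: list[str] = field(default_factory=list)
    # Whether a version that does not rely on profiling is available.
    non_profiled_option: bool = False

    def gaps(self) -> list[str]:
        """Return the checklist items this record does not yet cover."""
        missing = []
        if not self.purpose:
            missing.append("purpose")
        if not self.signals:
            missing.append("ranking signals")
        if not self.user_controls:
            missing.append("user controls")
        if not self.non_profiled_option:
            missing.append("non-profiled alternative")
        return missing

    def summary(self) -> str:
        """Render a short plain-language explanation for users."""
        lines = [f"{self.name}: {self.purpose}"]
        for signal, meaning in self.signals.items():
            lines.append(f"- {signal}: {meaning}")
        return "\n".join(lines)


record = RecommenderRecord(
    name="Related articles",
    purpose="Suggests further reading on the same topic.",
    signals={
        "topic overlap": "shared tags with the current article",
        "recency": "newer articles rank slightly higher",
    },
    user_controls=["hide recommendations"],
)
print(record.gaps())  # → ['non-profiled alternative']
print(record.summary())
```

The `gaps()` check turns the list into something a team can review alongside code changes rather than only in a one-off policy discussion.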

The strongest example in the research report is the EU Digital Services Act. Under that framework, recommender systems are no longer treated as private magic. They are treated as auditable systems with legal obligations. You can review the legal basis directly in DSA Article 27 and the broader official explanation in the European Commission's DSA transparency summary.

This is where the conversation gets more interesting for businesses outside social media. A lot of websites now use recommendation blocks, behavioral targeting, on-site search ranking, or personalization layers they barely document internally. That may feel harmless on a midsize B2B site, but the underlying governance question is the same: can you explain what the system is doing, why it is doing it, and how a user can control it?
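One way to answer those questions on an ordinary site is to make every recommendation carry its reason. The sketch below assumes a hypothetical `Article` record with tags and a publish date; it ranks by shared tags, falls back to recency, and returns a plain label such as "Related by topic" or "Most recent" with each suggestion so the interface can display it.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Article:
    """Hypothetical content item; field names are illustrative."""

    slug: str
    tags: set[str]
    published: date


def related_with_reasons(current: Article, catalog: list[Article],
                         limit: int = 3) -> list[tuple[str, str]]:
    """Return (slug, reason) pairs so every suggestion has a visible label.

    Ranking: most shared tags first, newer items break ties. If too few
    items share a tag, the remaining slots are filled by recency.
    """
    candidates = [a for a in catalog if a.slug != current.slug]
    by_topic = sorted(
        (a for a in candidates if a.tags & current.tags),
        key=lambda a: (len(a.tags & current.tags), a.published),
        reverse=True,
    )
    picks = [(a.slug, "Related by topic") for a in by_topic[:limit]]
    if len(picks) < limit:
        chosen = {slug for slug, _ in picks}
        recent = sorted(
            (a for a in candidates if a.slug not in chosen),
            key=lambda a: a.published,
            reverse=True,
        )
        picks += [(a.slug, "Most recent") for a in recent[: limit - len(picks)]]
    return picks


catalog = [
    Article("dsa-basics", {"regulation", "dsa"}, date(2025, 3, 1)),
    Article("site-speed", {"performance"}, date(2025, 6, 1)),
    Article("tiktok-bans", {"regulation", "tiktok"}, date(2025, 9, 1)),
    Article("privacy-notices", {"privacy", "regulation"}, date(2024, 11, 1)),
]
print(related_with_reasons(catalog[2], catalog))
```

Because the ranking rules are this simple, the label shown to the reader is also a complete explanation of why each item appeared.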

For teams thinking about SEO and site quality, this regulatory direction overlaps with a familiar operational truth: unclear systems usually create poor outcomes. If any part of your website's content hierarchy, internal linking, or personalization logic is difficult to explain, it is usually also difficult for search engines, AI systems, and users to trust. That is one reason SeekLab.io's SEO audit approach emphasizes prioritizing what truly impacts growth instead of piling on opaque fixes.

3. How TikTok bans and social media regulation challenge the attention economy and behavioral manipulation

The deeper issue behind TikTok bans is not only data ownership. It is the design logic of the attention economy. Platforms optimize for watch time, return frequency, interaction, and prediction quality. Once those systems become highly adaptive, they can drift from serving user goals to shaping them.

That is where behavioral manipulation enters the discussion. Regulators and researchers increasingly worry that engagement systems do not simply respond to preferences. They intensify them, narrow them, and sometimes exploit users' vulnerabilities.

A few patterns keep appearing in the evidence base:

- short-form feedback loops accelerate content reinforcement

- infinite scroll removes natural stopping points

- micro-signals such as pause time and replay behavior make profiling more sensitive

- youth users are particularly exposed to harmful convergence around identity, self-image, or distress content

- geopolitical narrative amplification can happen subtly through ranking dynamics, not only through explicit misinformation

The research report points to several strong references here, including the NCRI report on TikTok search and narrative amplification, the ISD analysis of transparent recommender systems, and the WebConf 2024 paper on TikTok personalization and filter bubbles.

The regulatory response is moving from "label the risk" to "change the design." That includes pressure for:

- non-profiled feed options

- stronger youth defaults

- limits on dark patterns

- more obvious explanation interfaces

- risk assessments tied to recommender systems
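Several of these pressures translate into defaults rather than new features. As a hypothetical sketch, the function below picks more protective feed settings for minors and for users whose age is unknown; the setting names, the age threshold of 18, and the 30-minute break reminder are illustrative assumptions, not requirements drawn from any specific law.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FeedSettings:
    """Hypothetical per-account feed settings (names are illustrative)."""

    profiled_feed: bool                    # ranking uses the behavioral profile
    autoplay: bool                         # next item starts without user action
    break_reminder_minutes: Optional[int]  # None means no reminder


def default_settings(age: Optional[int]) -> FeedSettings:
    """Return starting settings only; later user changes are out of scope.

    Treating an unknown age like a minor is a design choice in this sketch.
    """
    if age is None or age < 18:
        # Protective defaults: non-profiled feed, no autoplay, break reminders.
        return FeedSettings(profiled_feed=False, autoplay=False,
                            break_reminder_minutes=30)
    return FeedSettings(profiled_feed=True, autoplay=True,
                        break_reminder_minutes=None)


print(default_settings(15))
print(default_settings(None) == default_settings(12))  # → True
```

Starting from the protective side means the profiled feed becomes an opt-in for younger users instead of something they have to find and switch off.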

For website operators, the implication is not that every recommendation module is dangerous. It is that manipulative design is becoming a liability. Countdown timers disconnected from reality, fake urgency, intrusive popups that interrupt reading, and recommendation logic that exists only to stretch dwell time all look worse in a market that is becoming more skeptical of optimization theater.

That is also why content strategy is shifting. Brands that explain rather than trap are likely to age better than brands that over-engineer engagement. If you want a related view on building content around real authority instead of scattered attention grabs, SeekLab.io's guide to topical authority and content planning is a useful internal reference.

4. How TikTok bans and social media regulation reinforce digital sovereignty

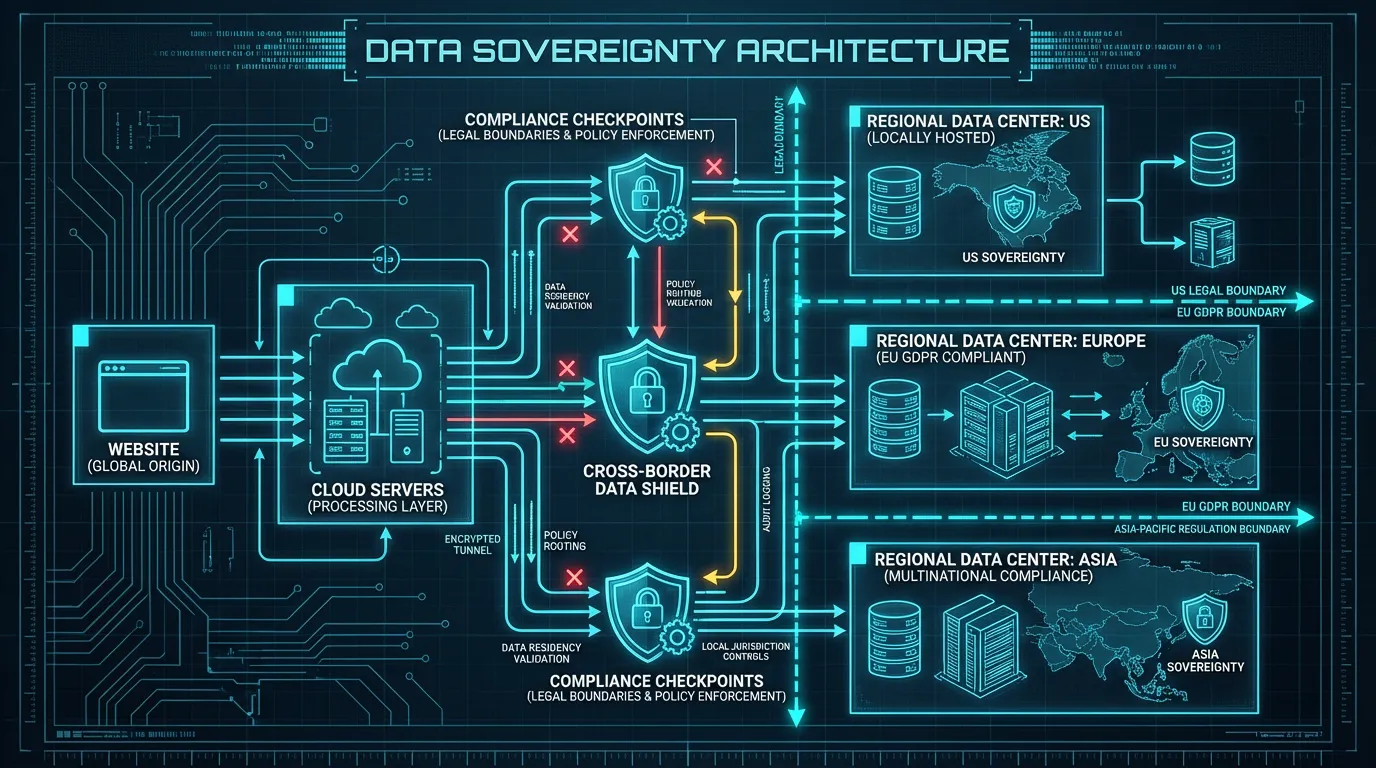

Digital sovereignty used to sound abstract. TikTok made it operational. Governments increasingly want control over where data is stored, which jurisdiction can compel access, and whether core recommendation infrastructure is effectively governed under local law.

The best-known example is Project Texas in the US. It was designed to localize US user data and create tighter oversight around TikTok's operations. Yet even that arrangement drew criticism because data history, cross-border engineering realities, and corporate control questions did not disappear just because storage moved. For that debate, Lawfare's analysis of Project Texas and Business Insider's reporting on US data storage questions help clarify the limits of localization as a complete solution.

This matters for ordinary businesses more than many marketing teams realize. A typical website may use:

- analytics tools

- heatmaps

- consent platforms

- CRM scripts

- ad pixels

- chat widgets

- personalization engines

- multilingual CDN layers

That stack can send user data across jurisdictions in ways the business itself cannot clearly map. In a stricter regulatory environment, that is no longer just a privacy-page detail. It becomes a governance issue, a buyer-trust issue, and sometimes an SEO issue when pages become overloaded with scripts and vague disclosures.

A practical checklist for site owners looks like this:

| Question | Why it matters |
|---|---|
| What user data do we collect? | Many teams cannot answer this precisely across all scripts |
| Where is it stored and processed? | Jurisdiction determines legal exposure |
| Which vendors can access it? | Third-party risk is often larger than first-party risk |
| Do our disclosures match reality? | Many privacy notices lag behind implementation |
| Can we justify each tracker or personalization layer? | Dead scripts create risk without business value |
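Parts of this checklist can be automated. The sketch below assumes you already have a list of third-party script URLs for a page (for example, exported from a crawl or a browser's network panel) and a hand-maintained vendor register; `VENDOR_REGISTER`, its fields, and the example.com hosts are hypothetical placeholders. It flags scripts nobody has registered and registered vendors that the privacy notice does not mention.

```python
from urllib.parse import urlparse

# Hypothetical vendor register: script host -> what the team has documented.
# Every entry is an illustrative placeholder, not a real vendor list.
VENDOR_REGISTER = {
    "analytics.example.com": {"vendor": "Analytics vendor", "region": "EU",
                              "purpose": "traffic measurement"},
    "chat.example.net": {"vendor": "Chat widget vendor", "region": "US",
                         "purpose": "customer support"},
}


def audit_scripts(script_urls: list[str], disclosed_vendors: set[str]) -> list[dict]:
    """Map each script to the register and flag gaps.

    Flags:
      "unregistered": host is not in the register (nobody has justified it)
      "undisclosed":  vendor is registered but missing from the privacy notice
    """
    findings = []
    for url in script_urls:
        host = urlparse(url).hostname or ""
        entry = VENDOR_REGISTER.get(host)
        if entry is None:
            findings.append({"host": host, "flag": "unregistered"})
        elif entry["vendor"] not in disclosed_vendors:
            findings.append({"host": host, "flag": "undisclosed",
                             "region": entry["region"]})
    return findings


scripts = [
    "https://analytics.example.com/tag.js",
    "https://chat.example.net/widget.js?v=2",
    "https://old-heatmap.example.org/hm.js",
]
print(audit_scripts(scripts, disclosed_vendors={"Analytics vendor"}))
```

The region is carried along so the jurisdiction question from the table stays visible next to each finding. A real audit would also need to cover first-party data, cookies, and server-side integrations, which this sketch ignores.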

This kind of problem should be solved with prioritization, not panic. SeekLab.io helps brands build search visibility and AI-era discoverability through high-quality content production and technical optimization, and the useful principle here is that not every issue matters equally. The goal is to identify what genuinely limits growth, what creates unnecessary risk, and what can wait. For many websites, that starts with a structured crawl, rendering review, internal-link analysis, and content architecture check rather than a random list of plugin changes.

5. What TikTok bans and social media regulation mean for AI systems, SEO, and independent websites

The broader lesson from TikTok is hard to miss: if regulators are already alarmed by a content feed that predicts and shapes behavior, they are likely to be even less tolerant of autonomous or semi-autonomous AI systems that can act, decide, recommend, and transact.

This is where the topic becomes relevant for AI-era websites and future agent-like experiences. TikTok's "For You" model is not an autonomous agent, but it shares a logic with reinforcement-driven systems: observe behavior, test options, maximize reward, repeat. Once that reward function is engagement, the system can work against a person's reflective goals surprisingly quickly.
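The observe, test, maximize, repeat loop described above is, in its simplest textbook form, a multi-armed bandit. The toy sketch below uses epsilon-greedy selection over three made-up content categories with hidden average watch times; it is not TikTok's algorithm, and the category names and numbers are assumptions chosen only to show what happens when the reward is engagement alone.

```python
import random

# Toy assumption: hidden mean watch time (seconds) per content category.
MEAN_WATCH_TIME = {"explainers": 20.0, "hobby": 35.0, "provocative": 60.0}


def run_feed(steps: int, epsilon: float = 0.1, seed: int = 0) -> dict[str, int]:
    """Epsilon-greedy loop: observe, test options, maximize reward, repeat.

    Returns how many times each category was shown. The reward is watch
    time only, so the loop never asks whether the user wanted more of it.
    """
    rng = random.Random(seed)
    totals = {c: 0.0 for c in MEAN_WATCH_TIME}  # summed watch time per category
    counts = {c: 0 for c in MEAN_WATCH_TIME}    # impressions per category
    for _ in range(steps):
        untried = [c for c in counts if counts[c] == 0]
        if untried:
            choice = untried[0]                  # try each category once
        elif rng.random() < epsilon:
            choice = rng.choice(list(counts))    # explore at random
        else:
            # Exploit: pick the best average watch time observed so far.
            choice = max(counts, key=lambda c: totals[c] / counts[c])
        # Observed watch time is the hidden mean plus Gaussian noise.
        totals[choice] += rng.gauss(MEAN_WATCH_TIME[choice], 5.0)
        counts[choice] += 1
    return counts


print(run_feed(1000))
```

With these toy numbers, the loop quickly concentrates almost all exposure on the category with the highest watch time, and exploration keeps only a small share for the others. Nothing in the code is malicious; the outcome follows from what the reward function counts.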

That leads to a more useful question for businesses: what kind of digital systems respect cognitive autonomy instead of exploiting it?

A practical framework for websites and AI-enabled experiences:

- State the purpose clearly. If a page is informational, make it informational. If it is commercial, make that obvious. Hidden intent breaks trust faster than weak design.

- Make recommendation logic legible. "Related by topic", "most recent", and "popular among similar readers" are clearer than black-box suggestions with no explanation.

- Reduce manipulative friction, add protective friction. Remove deceptive obstacles to exit, but add confirmation steps to higher-risk actions.

- Collect only necessary behavioral data. If a feature does not improve user outcomes or business outcomes, it usually does not deserve the tracking cost.

- Build content that machines and humans can both parse. Strong headings, tables, summaries, schema, and coherent internal linking are not just SEO mechanics. They are part of transparent communication.

That last point is where this topic connects directly back to search. Search engines and AI systems increasingly reward clarity. A site that explains its pages, structures its information well, and avoids manipulative clutter is easier to crawl, easier to summarize, and often easier to convert. That is the operating logic behind SeekLab.io's work: improve content structure, information clarity, page architecture, internal linking, and overall site readiness so search engines, AI systems, and real users can understand the site with less friction.

You can see that approach reflected in resources like High-Quality Blog Content Optimization Tips, which focuses on turning content into something easier to index, faster to load, and better at converting instead of simply publishing more.
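Schema is one of the more concrete items in the framework above. As a minimal sketch, the function below builds a schema.org `Article` object as JSON-LD, the format typically embedded in a `<script type="application/ld+json">` tag. The property names come from the schema.org vocabulary; the headline, URL, date, and publisher values are placeholders, and which properties a particular search engine actually uses is set by that engine's own documentation.

```python
import json


def article_jsonld(headline: str, description: str, url: str,
                   date_published: str, author_name: str) -> str:
    """Build a schema.org Article object serialized as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "description": description,
        "mainEntityOfPage": url,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Organization", "name": author_name},
    }
    return json.dumps(data, ensure_ascii=False, indent=2)


# All values below are placeholders for illustration.
snippet = article_jsonld(
    headline="How TikTok bans and social media regulation now affect every platform",
    description="Five regulatory shifts and what they mean for websites.",
    url="https://example.com/tiktok-regulation",
    date_published="2026-01-15",
    author_name="Example Publisher",
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

Generating the markup from the same fields that render the visible page helps keep the structured data and the on-page content from drifting apart.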

For marketing managers and developers, the business takeaway is straightforward. The web is moving toward more scrutiny of hidden persuasion systems and more value for transparent, technically sound experiences. That does not mean every company needs a policy department. It does mean your website should be able to answer basic questions clearly:

- what the page is for

- how personalization works

- what data is collected

- where users go next

- why search engines and AI systems should trust the content

A site that cannot answer those questions will struggle more over time, even if its rankings briefly hold.

If you want to turn that kind of regulatory signal into practical action, the smartest first step is usually not a redesign. It is a focused review of what is actually blocking clarity, discoverability, and conversion. SeekLab.io provides that through full-site crawling, structured technical analysis, content planning, multilingual architecture support, and actionable guidance. If you want to sort signal from noise before making changes, you can get a free audit report or contact us to discuss where your site is most exposed or most underleveraged.

Key takeaways on TikTok bans and social media regulation

- TikTok bans and social media regulation now function as a model for broader platform oversight.

- Algorithmic transparency is becoming a legal and operational expectation, not a PR phrase.

- The attention economy is under pressure because engagement-maximizing design often overlaps with behavioral manipulation.

- Digital sovereignty is changing how governments view data localization, platform ownership, and jurisdiction.

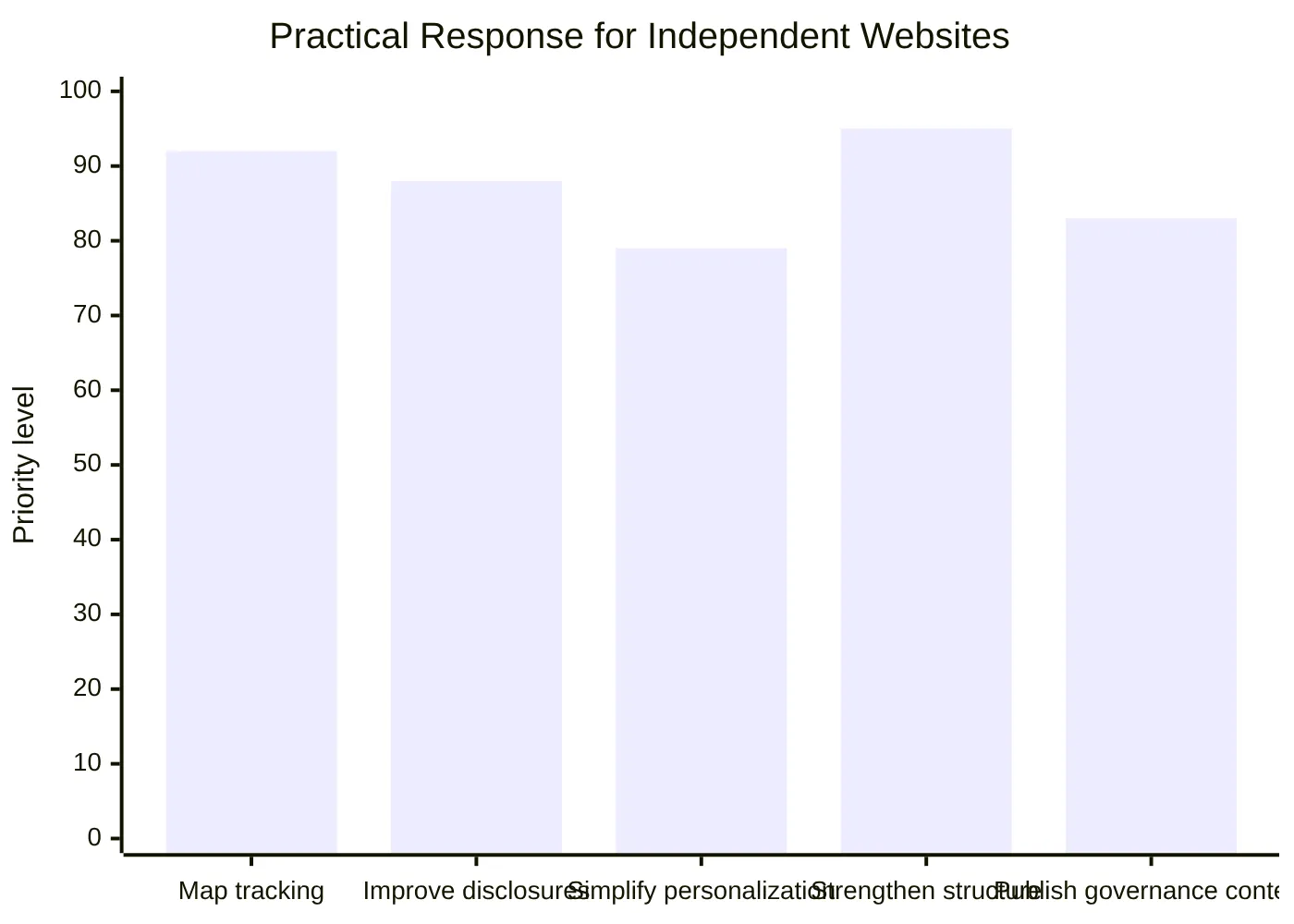

- Independent websites should respond by improving technical clarity, reducing manipulative UX patterns, and building content that supports both SEO and trust.

The companies most likely to benefit from this shift are not the loudest ones. They are the ones with cleaner systems, better explanations, and stronger judgment about what to optimize and what to leave alone.